Network support:

Transmission control protocol windowing

For a quick explanation of transmission control protocol (TCP) windowing, watch the video below. After it, we go into more detail and provide examples.

TCP keeps track of the amount of data allowed to be sent at a given point; this is dictated by the host receiving the data, the host sending the data and the conditions of the network.

There are three TCP windows used in a TCP connection:

- Receive Window (RWIN)

- Send Window (SWIN)

- Congestion Window (CWIN)

Each window serves an important purpose for the flow of data between the TCP sender and TCP receiver. In practice the two hosts are usually referred to as client and server; here they are referred to as sender and receiver, because data can flow in either direction: client to server or server to client.

The RWIN dictates how much data a TCP receiver is willing to accept before sending an acknowledgement (ACK) back to the TCP sender. The receiver advertises its RWIN to the sender, so the sender knows it can’t send more than an RWIN’s worth of data before receiving an ACK from the receiver.

The SWIN dictates how much data a TCP sender is allowed to send before it must receive an ACK from the TCP receiver.

The CWIN is a variable that changes dynamically according to the conditions of the network. If data is lost or delivered out of order, the CWIN is typically reduced.

The RWIN and SWIN are configurable values on the host, while the CWIN is dynamic and can’t be configured.
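One way to picture how the three windows interact is to treat the smallest of them as the limit on unacknowledged data. The Python sketch below assumes that simplified model; the function name and sample values are illustrative and are not taken from any particular TCP stack.

```python
def usable_window(rwin: int, swin: int, cwin: int, bytes_in_flight: int = 0) -> int:
    """Bytes the sender may still transmit before it must wait for an ACK.

    Simplified model: the effective window is the smallest of the three
    windows, minus data already sent but not yet acknowledged.
    """
    effective = min(rwin, swin, cwin)
    return max(0, effective - bytes_in_flight)


# Assumed sample values: the receiver advertises about 64 KB, the sender's
# window is 128 KB, and the congestion window is 20 segments of 1460 bytes.
print(usable_window(rwin=65_535, swin=131_072, cwin=20 * 1460))

# With 10,000 bytes already unacknowledged, less room remains.
print(usable_window(rwin=65_535, swin=131_072, cwin=20 * 1460, bytes_in_flight=10_000))
```

In this model the congestion window is the limiting factor, even though both hosts are configured to allow more.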

The TCP window (RWIN/SWIN) is analogous to the number of seats on an airplane; the number of people filling the seats is analogous to bytes. Let’s assume an airplane has 200 seats and it’s flying from New York to San Francisco, which takes 6 hours one way, or 12 hours there and back; 12 hours is our round-trip time (RTT). It’s a mid-afternoon flight, so only 50 seats are filled. If we assume the plane is only allowed to transport people in one direction and must return to New York before transporting more people, we can calculate the average number of people transported per hour.

In this case we are transporting 50 people every 12 hours, because the airplane must fly from New York to San Francisco and back. 50 people/12 hours equals ~4 people/hour.

If the airline sells every seat, the plane transports 200 people/12 hours, giving us an average of ~17 people/hour. It’s easy to see that the more seats are filled, the more efficient the plane trip will be.

TCP works the same way: to get the best possible performance out of TCP, the goal is to fill the TCP window whenever possible. In the analogy we talked about people transported per hour; in TCP terms this is bytes over time.

Let’s say we have purchased a 100 Mbps Ethernet circuit and we want to get the best possible performance for a file transfer between NY and CA. Our RTT is 80ms. Using the committed information rate (CIR) of 100 Mbps and the RTT of 80ms we can calculate the window size needed to get the best possible performance. The equation is known as the bandwidth delay product (BDP).

100,000,000 bits/sec/8 = 12,500,000 bytes/sec

80ms/1000 = .08 seconds

12,500,000 bytes/sec * .08 seconds = 1,000,000 bytes = 1 MB

To fill up the pipe (100 Mbps) we must use a TCP window of 1 MB, given an RTT of 80ms.
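The same bandwidth delay product calculation can be sketched in Python. The function name `bdp_bytes` is an assumption made for illustration; the inputs are the 100 Mbps and 80ms figures from the example above.

```python
def bdp_bytes(bandwidth_bps: float, rtt_ms: float) -> float:
    """Bandwidth delay product: bytes that must be in flight to fill the path."""
    bytes_per_second = bandwidth_bps / 8   # bits/sec -> bytes/sec
    rtt_seconds = rtt_ms / 1000            # milliseconds -> seconds
    return bytes_per_second * rtt_seconds


window = bdp_bytes(100_000_000, 80)
print(f"{window:,.0f} bytes")  # 1,000,000 bytes
```

Halving the RTT or the bandwidth halves the window needed, which is why the same host settings can perform well on a local network and poorly over a long-distance path.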

The window field in the TCP header is 16 bits wide. This means the largest window it can advertise is 65,535 bytes (2^16 - 1). This poses a problem: to fill up the 100 Mbps pipe over a path with 80ms of latency we must use a TCP window of 1 MB, but the TCP window can only grow to about 65 KB. To fill the pipe, one of two things must happen: either the latency must be reduced or the TCP window size must be increased. In this case our latency is fixed and we can’t lower it, so our only option is to increase the TCP window size. Since we can’t widen the window field in the TCP header, a different solution is needed.
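To see how far short an unscaled window falls on this path, the bandwidth delay product can be rearranged to give the throughput ceiling for a given window and RTT. This is a sketch: the function name is an assumption, and it uses 65,535 bytes, the largest value a 16-bit field can hold.

```python
def max_throughput_mbps(window_bytes: int, rtt_seconds: float) -> float:
    """Upper bound on throughput when one window is sent per round trip."""
    bits_per_second = window_bytes * 8 / rtt_seconds
    return bits_per_second / 1_000_000


# Largest unscaled window over the 80ms path from the example.
print(f"{max_throughput_mbps(65_535, 0.080):.2f} Mbps")     # 6.55 Mbps

# The 1 MB window calculated from the bandwidth delay product.
print(f"{max_throughput_mbps(1_000_000, 0.080):.2f} Mbps")  # 100.00 Mbps
```

With the unscaled window, the transfer can use only about 6.5% of the 100 Mbps circuit.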

The answer is TCP window scaling, defined in RFC 7323. With window scaling we can use a shift factor from 0 to 14. The shift factor is an exponent of two: the window is multiplied by 2 raised to the power of the shift factor. The examples below always multiply 65,536 bytes (2^16); this is just for illustration. In an actual TCP connection the TCP window will be a multiple of the maximum segment size (MSS), which is typically 1448 or 1460 bytes.

Shift Factor of 0 = 65,536 bytes * 2^0 = 65,536 bytes

Shift Factor of 1 = 65,536 bytes * 2^1 = 131,072 bytes

Shift Factor of 2 = 65,536 bytes * 2^2 = 262,144 bytes

…

Shift Factor of 12 = 65,536 bytes * 2^12 = 268,435,456 bytes

Shift Factor of 13 = 65,536 bytes * 2^13 = 536,870,912 bytes

Shift Factor of 14 = 65,536 bytes * 2^14 = 1,073,741,824 bytes

We can see that without window scaling, or using window scaling with a shift factor of zero, the calculated TCP window is 65,536 bytes. By using window scaling with a shift factor of 14 we can use a maximum calculated TCP window of ~1 GB.
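As a rough illustration, the smallest shift factor that can cover a target window can be found with a short loop. The sketch below uses 65,535 bytes (the largest value of the 16-bit window field) rather than the rounded 65,536 used in the list above; the function and constant names are assumptions made for this example.

```python
MAX_UNSCALED_WINDOW = 65_535  # largest value of the 16-bit window field
MAX_SHIFT = 14                # upper limit on the shift factor


def min_shift_for(window_bytes: int) -> int:
    """Smallest shift factor whose scaled window is at least window_bytes."""
    for shift in range(MAX_SHIFT + 1):
        # Shifting left by `shift` bits multiplies the window by 2^shift.
        if (MAX_UNSCALED_WINDOW << shift) >= window_bytes:
            return shift
    raise ValueError("window is larger than window scaling can represent")


# The 1 MB window needed for the 100 Mbps, 80ms path.
print(min_shift_for(1_000_000))  # 4
```

A shift factor of 3 gives at most 524,280 bytes, which is too small, while a shift factor of 4 gives up to 1,048,560 bytes.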

TCP window scaling is carried in the TCP header, specifically in the TCP options. It’s only advertised during TCP’s three-way handshake, in the SYN and SYN/ACK packets. If TCP window scaling isn’t advertised in the three-way handshake, there is no way to advertise it later on during the TCP connection.
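To make the option layout concrete, the sketch below walks the options bytes of a TCP header and looks for the window scale option (kind 3, length 3, followed by a one-byte shift count). The function name and the sample bytes are made up for illustration; a real capture would supply the options bytes from the SYN or SYN/ACK packet.

```python
from typing import Optional


def window_scale_shift(options: bytes) -> Optional[int]:
    """Return the shift count from a TCP options byte string, or None."""
    i = 0
    while i < len(options):
        kind = options[i]
        if kind == 0:            # End of Option List
            break
        if kind == 1:            # No-Operation: single byte, no length field
            i += 1
            continue
        if i + 1 >= len(options):
            break                # truncated option
        length = options[i + 1]
        if length < 2 or i + length > len(options):
            break                # malformed option
        if kind == 3 and length == 3:   # Window Scale option
            return options[i + 2]
        i += length              # skip to the next option
    return None


# Hypothetical SYN options: MSS 1460, NOP, window scale with shift count 7.
syn_with_scaling = bytes([2, 4, 0x05, 0xB4, 1, 3, 3, 7])
# Hypothetical SYN options: MSS 1460 only.
syn_without_scaling = bytes([2, 4, 0x05, 0xB4])

print(window_scale_shift(syn_with_scaling))     # 7
print(window_scale_shift(syn_without_scaling))  # None
```

A result of `None` for either the SYN or the SYN/ACK means window scaling is not in use on that connection.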

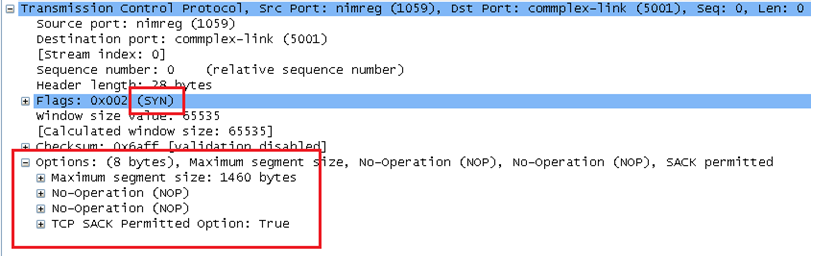

Example of a TCP SYN packet without window scaling:

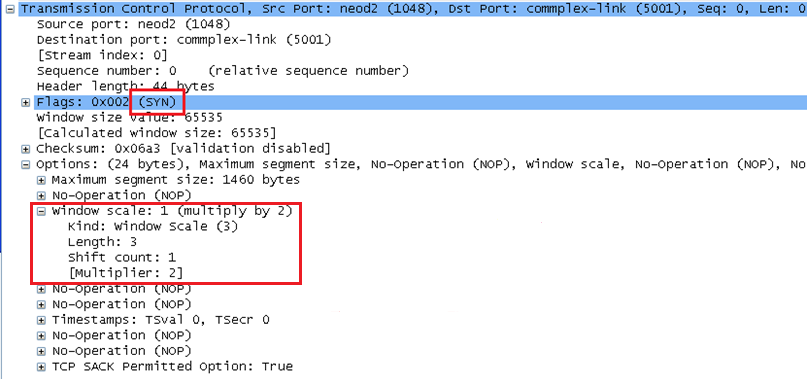

Example of a TCP SYN packet with window scaling:

TCP window scaling can be enabled on both FreeBSD and Windows:

FreeBSD

sudo sysctl net.inet.tcp.rfc1323=1

> sysctl -a net.inet.tcp | grep 1323

net.inet.tcp.rfc1323: 1

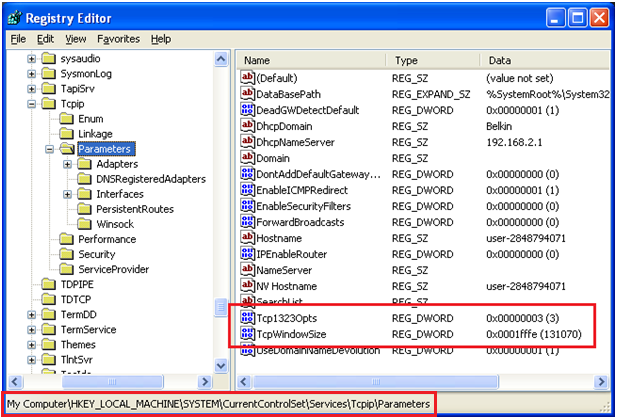

Example of enabling TCP window scaling in Windows XP

Adding a registry entry of Tcp1323Opts with a value of 3 enables TCP window scaling as well as TCP timestamps.

The registry entry is entered under the hierarchy of:

HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Services\Tcpip\Parameters

The registry entry of TcpWindowSize allows you to specify the size of the RWIN. In this case, 131070 bytes.
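The Tcp1323Opts value can be read as two flag bits. The sketch below assumes the commonly documented meaning of the values 0 to 3, where bit 0 enables window scaling and bit 1 enables timestamps; the function name is hypothetical, and the code only decodes a number, it does not read the registry.

```python
def decode_tcp1323opts(value: int) -> dict:
    """Decode a Tcp1323Opts value into the two options it controls."""
    return {
        "window_scaling": bool(value & 0b01),  # assumed: bit 0
        "timestamps": bool(value & 0b10),      # assumed: bit 1
    }


print(decode_tcp1323opts(3))  # {'window_scaling': True, 'timestamps': True}
print(decode_tcp1323opts(1))  # {'window_scaling': True, 'timestamps': False}
```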

Example of using FileZilla to test TCP performance

- The round-trip time in this instance was 39ms or .039 seconds

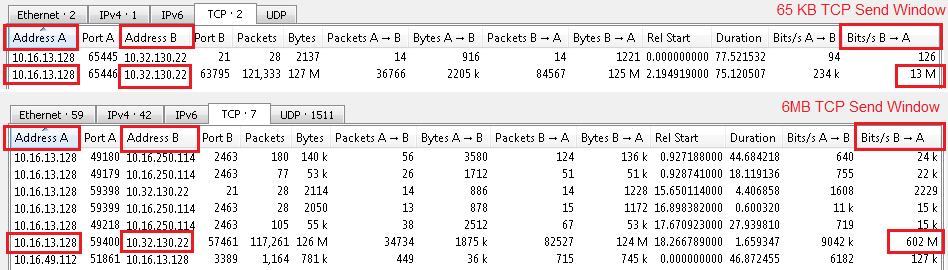

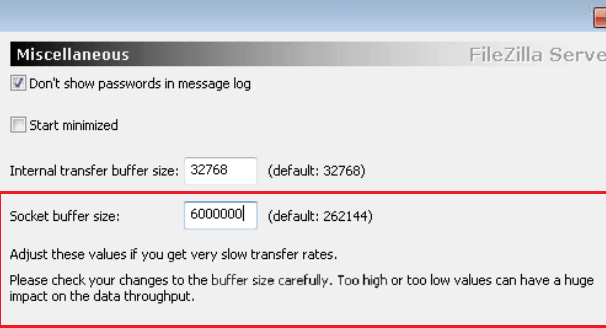

- The first image shows that with a 65 KB TCP send window (socket buffer size) the throughput is 13Mbps; with a 6MB TCP send window the throughput increases to 602Mbps

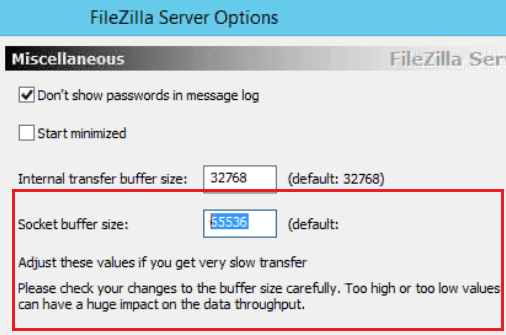

- The second image shows where this is changed – in the FileZilla Server options – Under Miscellaneous

- Calculations for maximum theoretical throughput (BDP):

- ((65,535 bytes / .039 seconds) * 8) / 1,000,000 = ~ 13Mbps

- ((6,000,000 bytes / .039 seconds) * 8) / 1,000,000 = ~1230Mbps

- A TCP send window of 65KB is too low for this path, as it permits a maximum of only 13Mbps. A larger TCP send window such as 6MB allows for much higher throughput, with a theoretical maximum of over 1Gbps. In this case the test reached 602Mbps after modifying the TCP send window (socket buffer size) in the FileZilla settings. This is one example of how an application can override the operating system’s TCP windowing settings.
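An application typically requests a larger send buffer through the standard socket options. The Python sketch below shows the general idea using `SO_SNDBUF`; it is not FileZilla's actual code, and the helper name is an assumption. The operating system may clamp, adjust or reject the requested size, so the value is read back afterwards.

```python
import socket


def open_socket_with_send_buffer(requested_bytes: int) -> socket.socket:
    """Create a TCP socket and ask the OS for a specific send buffer size."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_SNDBUF, requested_bytes)
    except OSError:
        # Some systems reject sizes above their configured limit;
        # in that case the default buffer size stays in effect.
        pass
    return sock


sock = open_socket_with_send_buffer(6_000_000)
granted = sock.getsockopt(socket.SOL_SOCKET, socket.SO_SNDBUF)
print(f"requested 6,000,000 bytes, operating system reports {granted:,} bytes")
sock.close()
```

If the reported size is much smaller than the request, the host's own buffer limits need to be raised before the application setting can take full effect.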